About

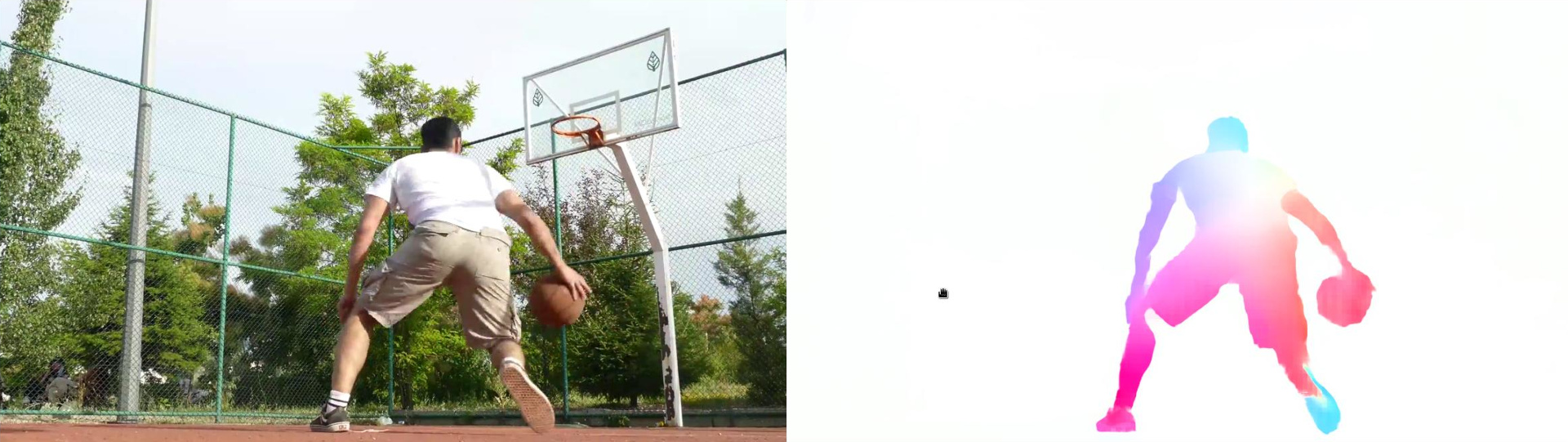

Estimate the optical flow from a video using a RAFT model.

Estimate per-pixel motion between two consecutive frames with a RAFT (Recurrent All-Pairs Field Transforms) model, which combines a convolutional neural network (CNN) with a recurrent neural network (RNN). The models are trained on the Sintel dataset.
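Because the motion is estimated between two consecutive frames, a video of N frames yields N-1 flow fields, one per (previous, current) pair. The plain-Python sketch below shows only that pairing. The generator name `consecutive_pairs` is hypothetical, and the sketch is not meant to describe how the plugin buffers frames internally.

```python
from typing import Iterable, Iterator, Tuple, TypeVar

T = TypeVar("T")


def consecutive_pairs(frames: Iterable[T]) -> Iterator[Tuple[T, T]]:
    """Yield (previous, current) pairs: N frames give N-1 pairs."""
    iterator = iter(frames)
    try:
        previous = next(iterator)  # the first frame has no predecessor
    except StopIteration:
        return  # empty input: no pairs
    for current in iterator:
        yield previous, current
        previous = current  # slide the window by one frame


print(list(consecutive_pairs(["f0", "f1", "f2"])))  # → [('f0', 'f1'), ('f1', 'f2')]
```

On Python 3.10 and later, `itertools.pairwise(frames)` yields the same pairs.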

🚀 Use with Ikomia API

1. Install Ikomia API

We strongly recommend using a virtual environment. If you're not sure where to start, we offer a tutorial here.

Then install the API with `pip install ikomia`.

2. Create your workflow
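A minimal sketch of a workflow that runs the algorithm on every frame of a video file. It assumes the Ikomia API calls `Workflow()`, `add_task(name=..., auto_connect=True)`, `run_on(array=...)` and `get_output(0).get_image()`, and assumes OpenCV (`cv2`) is installed to read the video. The function name `run_raft_on_video` is hypothetical. The imports sit inside the function so the snippet can be loaded even where those packages are absent.

```python
from typing import Any, List


def run_raft_on_video(video_path: str) -> List[Any]:
    """Hypothetical helper: run infer_raft_optical_flow on each frame of a video.

    Assumes the Ikomia API and OpenCV are installed (imported lazily below).
    """
    import cv2
    from ikomia.dataprocess.workflow import Workflow

    # Create a workflow and add the RAFT task by its Ikomia HUB name
    wf = Workflow()
    algo = wf.add_task(name="infer_raft_optical_flow", auto_connect=True)

    flow_images = []
    stream = cv2.VideoCapture(video_path)
    try:
        while True:
            ok, frame = stream.read()
            if not ok:  # end of video or read error
                break
            # Feed the current frame; the task is assumed to pair it with the previous one
            wf.run_on(array=frame)
            # Output 0 is the optical flow image (CImageIO); keep an independent copy
            flow_images.append(algo.get_output(0).get_image().copy())
    finally:
        stream.release()  # always free the video handle
    return flow_images
```

Usage: `flows = run_raft_on_video("path/to/video.mp4")`. Since flow needs two frames, the result for the very first frame may be empty or uninformative. For long videos, writing each image to disk is preferable to keeping them all in memory.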

☀️ Use with Ikomia Studio

Ikomia Studio offers a friendly UI with the same features as the API.

- If you haven't started using Ikomia Studio yet, download and install it from this page.

- For additional guidance on getting started with Ikomia Studio, check out this blog post.

📝 Set algorithm parameters

- small (bool, default=True): True to use the small model (faster); False to use the large model (slower, higher quality).

- cuda (bool, default=True): if True, run with CUDA acceleration on the GPU; otherwise, run on the CPU.
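Ikomia task parameters are commonly passed as a dictionary of strings. The sketch below assumes that convention. The helper name `raft_parameters` is hypothetical; the keys `small` and `cuda` and their defaults come from the list above.

```python
from typing import Dict


def raft_parameters(small: bool = True, cuda: bool = True) -> Dict[str, str]:
    """Hypothetical helper: build the parameter dict for infer_raft_optical_flow.

    Booleans are converted to the strings "True"/"False", assuming the task
    parses its parameters from strings.
    """
    return {"small": str(small), "cuda": str(cuda)}


# Large model on the CPU: slower and higher quality, no GPU required
print(raft_parameters(small=False, cuda=False))  # → {'small': 'False', 'cuda': 'False'}
```

The resulting dictionary can then be handed to the RAFT task added to an Ikomia workflow, for example `algo.set_parameters(raft_parameters(small=False))`, where `algo` is that task object.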

🔍 Explore algorithm outputs

Every algorithm produces specific outputs, yet they can all be explored the same way with the Ikomia API. For a more in-depth understanding of managing algorithm outputs, please refer to the documentation.

The RAFT algorithm generates one output:

- Optical flow image (CImageIO)
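Dense optical flow is typically rendered as a color image, one motion vector (u, v) per pixel. To illustrate how such an encoding works, here is a pure-Python sketch of the HSV convention used in OpenCV's dense optical-flow tutorial: hue encodes the direction of motion and brightness encodes its magnitude relative to the largest motion in the field. This is an assumption for illustration only; the plugin may use a different color wheel (the original RAFT code ships its own), so colors need not match. The name `flow_to_rgb` is hypothetical.

```python
import colorsys
import math
from typing import List, Tuple

Flow = List[List[Tuple[float, float]]]    # flow[y][x] = (u, v) displacement in pixels
Image = List[List[Tuple[int, int, int]]]  # image[y][x] = (r, g, b), each in 0..255


def flow_to_rgb(flow: Flow) -> Image:
    """Color-code a dense flow field: hue = direction, value = relative magnitude."""
    # Normalize by the largest magnitude in the field; avoid dividing by zero
    max_mag = max((math.hypot(u, v) for row in flow for u, v in row), default=0.0)
    scale = max_mag if max_mag > 0 else 1.0

    image: Image = []
    for row in flow:
        out_row = []
        for u, v in row:
            # Direction in [0, 2*pi); positive v means downward motion in image coordinates
            angle = math.atan2(v, u) % (2 * math.pi)
            hue = angle / (2 * math.pi)
            value = math.hypot(u, v) / scale  # 0 = no motion (black), 1 = fastest pixel
            r, g, b = colorsys.hsv_to_rgb(hue, 1.0, value)
            out_row.append((round(r * 255), round(g * 255), round(b * 255)))
        image.append(out_row)
    return image


print(flow_to_rgb([[(0.0, 0.0), (2.0, 0.0)]]))  # → [[(0, 0, 0), (255, 0, 0)]]
```

Normalizing per field makes small motions visible but means brightness is not comparable across frames; a fixed maximum magnitude would be the alternative when consistency matters.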

Developer

Ikomia

License

BSD 3-Clause "New" or "Revised" License

A permissive license similar to the BSD 2-Clause License, but with a 3rd clause that prohibits others from using the name of the copyright holder or its contributors to promote derived products without written consent.

| Permissions | Conditions | Limitations |
|---|---|---|
| Commercial use | License and copyright notice | Liability |
| Modification | | Warranty |
| Distribution | | |
| Private use | | |

This is not legal advice: this description is for informational purposes only and does not constitute the license itself. Provided by choosealicense.com.